Data Contract CLI

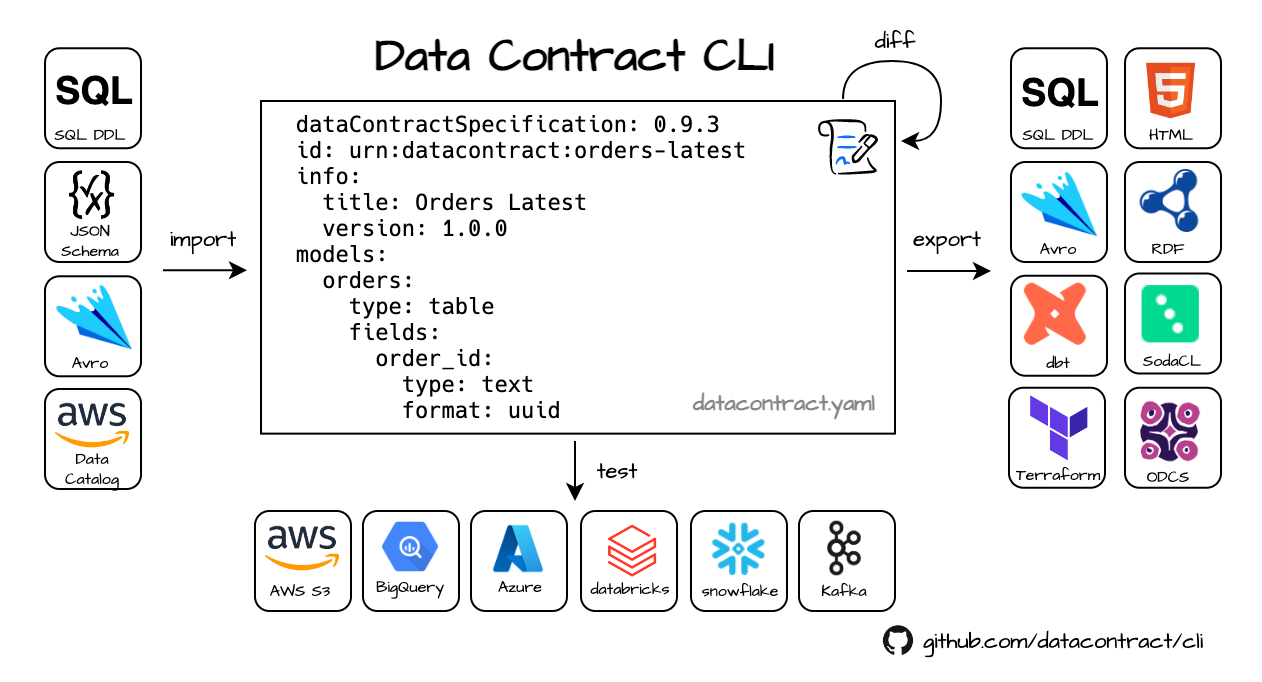

The datacontract CLI is an open-source command-line tool for working with data contracts.

It natively supports the Open Data Contract Standard to lint data contracts, connect to data sources and execute schema and quality tests, and export to different formats.

The tool is written in Python.

It can be used as a standalone CLI tool, in a CI/CD pipeline, or directly as a Python library.

Quick navigation: Documentation · Best Practices · Custom Export and Import · Development Setup

Getting started

Let’s look at this data contract: https://datacontract.com/orders-v1.odcs.yaml

We have a servers section with endpoint details to a Postgres database, schema for the structure and semantics of the data, service levels and quality attributes that describe the expected freshness and number of rows.

This data contract contains all information to connect to the database and check that the actual data meets the defined schema specification and quality expectations. We can use this information to test whether the actual data product complies with the data contract.

Let’s use uv to install the CLI (or use the Docker image):

$ uv tool install --python python3.11 --upgrade 'datacontract-cli[all]'

Now, let’s run the tests:

$ export DATACONTRACT_POSTGRES_USERNAME=datacontract_cli.egzhawjonpfweuutedfy

$ export DATACONTRACT_POSTGRES_PASSWORD=jio10JuQfDfl9JCCPdaCCpuZ1YO

$ datacontract test https://datacontract.com/orders-v1.odcs.yaml

# returns:

Testing https://datacontract.com/orders-v1.odcs.yaml

Server: production (type=postgres, host=aws-1-eu-central-2.pooler.supabase.com, port=6543, database=postgres, schema=dp_orders_v1)

╭────────┬──────────────────────────────────────────────────────────┬─────────────────────────┬─────────╮

│ Result │ Check │ Field │ Details │

├────────┼──────────────────────────────────────────────────────────┼─────────────────────────┼─────────┤

│ passed │ Check that field 'line_item_id' is present │ line_items.line_item_id │ │

│ passed │ Check that field line_item_id has type UUID │ line_items.line_item_id │ │

│ passed │ Check that field line_item_id has no missing values │ line_items.line_item_id │ │

│ passed │ Check that field 'order_id' is present │ line_items.order_id │ │

│ passed │ Check that field order_id has type UUID │ line_items.order_id │ │

│ passed │ Check that field 'price' is present │ line_items.price │ │

│ passed │ Check that field price has type INTEGER │ line_items.price │ │

│ passed │ Check that field price has no missing values │ line_items.price │ │

│ passed │ Check that field 'sku' is present │ line_items.sku │ │

│ passed │ Check that field sku has type TEXT │ line_items.sku │ │

│ passed │ Check that field sku has no missing values │ line_items.sku │ │

│ passed │ Check that field 'customer_id' is present │ orders.customer_id │ │

│ passed │ Check that field customer_id has type TEXT │ orders.customer_id │ │

│ passed │ Check that field customer_id has no missing values │ orders.customer_id │ │

│ passed │ Check that field 'order_id' is present │ orders.order_id │ │

│ passed │ Check that field order_id has type UUID │ orders.order_id │ │

│ passed │ Check that field order_id has no missing values │ orders.order_id │ │

│ passed │ Check that unique field order_id has no duplicate values │ orders.order_id │ │

│ passed │ Check that field 'order_status' is present │ orders.order_status │ │

│ passed │ Check that field order_status has type TEXT │ orders.order_status │ │

│ passed │ Check that field 'order_timestamp' is present │ orders.order_timestamp │ │

│ passed │ Check that field order_timestamp has type TIMESTAMPTZ │ orders.order_timestamp │ │

│ passed │ Check that field 'order_total' is present │ orders.order_total │ │

│ passed │ Check that field order_total has type INTEGER │ orders.order_total │ │

│ passed │ Check that field order_total has no missing values │ orders.order_total │ │

╰────────┴──────────────────────────────────────────────────────────┴─────────────────────────┴─────────╯

🟢 data contract is valid. Run 25 checks. Took 3.938887 seconds.

Voilà, the CLI tested that the YAML itself is valid, all records comply with the schema, and all quality attributes are met.

We can also use the data contract metadata to export in many formats, e.g., to generate a SQL DDL:

$ datacontract export sql https://datacontract.com/orders-v1.odcs.yaml

# returns:

-- Data Contract: orders

-- SQL Dialect: postgres

CREATE TABLE orders (

order_id None not null primary key,

customer_id text not null,

order_total integer not null,

order_timestamp None,

order_status text

);

CREATE TABLE line_items (

line_item_id None not null primary key,

sku text not null,

price integer not null,

order_id None

);

Or generate an HTML export:

$ datacontract export html --output orders-v1.odcs.html https://datacontract.com/orders-v1.odcs.yaml

Usage

# create a new data contract from example and write it to odcs.yaml

$ datacontract init odcs.yaml

# edit the data contract in the Data Contract Editor (web UI)

$ datacontract edit odcs.yaml

# lint the odcs.yaml and stop after the first validation error (default).

$ datacontract lint odcs.yaml

# show a changelog between two data contracts

$ datacontract changelog v1.odcs.yaml v2.odcs.yaml

# execute schema and quality checks (define credentials as environment variables)

$ datacontract test odcs.yaml

# generate dbt tests from a contract into your dbt project and run `dbt test`

$ datacontract dbt sync orders.odcs.yaml --project-dir ./warehouse

# export data contract as html (other formats: avro, dbt-models, dbt-sources, dbt-staging-sql, jsonschema, odcs, rdf, sql, sodacl, terraform, ...)

$ datacontract export html datacontract.yaml --output odcs.html

# import sql (other formats: avro, glue, bigquery, jsonschema, excel ...)

$ datacontract import sql --source my-ddl.sql --dialect postgres --output odcs.yaml

# import from Excel template

$ datacontract import excel --source odcs.xlsx --output odcs.yaml

# export to Excel template

$ datacontract export excel --output odcs.xlsx odcs.yaml

Programmatic (Python)

from datacontract.data_contract import DataContract

data_contract = DataContract(data_contract_file="odcs.yaml")

run = data_contract.test()

if not run.has_passed():

    print("Data quality validation failed.")

    # Abort pipeline, alert, or take corrective actions...
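
To use this check as a gate in a pipeline step, one option is to turn a failed run into an exception. The following is a minimal sketch, not part of the library: `DataContractViolation` and `ensure_contract_passed` are hypothetical names, and the only thing assumed about the run object is the `has_passed()` method shown above.

```python
class DataContractViolation(RuntimeError):
    """Raised when a data contract test run did not pass (hypothetical helper)."""


def ensure_contract_passed(run, contract_name="odcs.yaml"):
    """Raise if the run failed; return the run unchanged otherwise.

    `run` is expected to be the object returned by DataContract.test();
    only its has_passed() method is used here.
    """
    if not run.has_passed():
        raise DataContractViolation(
            f"Data contract {contract_name} failed validation."
        )
    return run
```

A pipeline step could then call `ensure_contract_passed(data_contract.test())` and let the scheduler handle the exception.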

How to

- How to integrate Data Contract CLI in your CI/CD pipeline as a GitHub Action

- How to run the Data Contract CLI API to test data contracts with POST requests

- How to run Data Contract CLI in a Databricks pipeline

Installation

Choose the most appropriate installation method for your needs:

uv

The preferred way to install is uv:

uv tool install --python python3.11 --upgrade 'datacontract-cli[all]'

uvx

If you have uv installed, you can run datacontract-cli directly without installing:

uv run --with 'datacontract-cli[all]' datacontract --version

pip

Python 3.10, 3.11, and 3.12 are supported. We recommend using Python 3.11.

python3 -m pip install 'datacontract-cli[all]'

datacontract --version

pip with venv

Typically it is better to install the application in a virtual environment for your projects:

cd my-project

python3.11 -m venv venv

source venv/bin/activate

pip install 'datacontract-cli[all]'

datacontract --version

pipx

pipx installs into an isolated environment.

pipx install 'datacontract-cli[all]'

datacontract --version

Docker

You can also use our Docker image to run the CLI tool. It is also convenient for CI/CD pipelines.

docker pull datacontract/cli

docker run --rm -v "${PWD}:/home/datacontract" datacontract/cli

You can create an alias for the Docker command to make it easier to use:

alias datacontract='docker run --rm -v "${PWD}:/home/datacontract" datacontract/cli:latest'

Note: The output of Docker command line messages is limited to 80 columns and may include line breaks. Don’t pipe docker output to files if you want to export code. Use the --output option instead.
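
When the Docker image is driven from a script, the note above suggests writing exports with --output rather than capturing stdout. The sketch below only builds the argument list; `docker_export_command` is a hypothetical helper, and it assumes that relative paths are resolved inside the mounted /home/datacontract directory.

```python
import os


def docker_export_command(export_format, contract, output, workdir=None):
    """Build a `docker run` argument list that exports via --output.

    Assumption: the container resolves relative paths against the
    mounted /home/datacontract directory.
    """
    workdir = workdir or os.getcwd()
    return [
        "docker", "run", "--rm",
        "-v", f"{workdir}:/home/datacontract",
        "datacontract/cli",
        "export", export_format, "--output", output, contract,
    ]
```

The resulting list can be passed to `subprocess.run(cmd, check=True)`; the exported file then appears in the mounted working directory instead of being wrapped at 80 columns.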

Optional Dependencies (Extras)

The CLI tool defines several optional dependencies (also known as extras) that can be installed for use with specific server types. The all extra includes all server dependencies.

uv tool install --python python3.11 --upgrade 'datacontract-cli[all]'

A list of available extras:

| Dependency | Installation Command |

|---|---|

| Amazon Athena | pip install datacontract-cli[athena] |

| Avro Support | pip install datacontract-cli[avro] |

| Azure Integration | pip install datacontract-cli[azure] |

| Google BigQuery | pip install datacontract-cli[bigquery] |

| CSV | pip install datacontract-cli[csv] |

| Databricks Integration | pip install datacontract-cli[databricks] |

| DBML | pip install datacontract-cli[dbml] |

| DuckDB (local/S3/GCS/Azure file testing) | pip install datacontract-cli[duckdb] |

| Excel | pip install datacontract-cli[excel] |

| GCS Integration | pip install datacontract-cli[gcs] |

| Iceberg | pip install datacontract-cli[iceberg] |

| Impala | pip install datacontract-cli[impala] |

| Kafka Integration | pip install datacontract-cli[kafka] |

| MySQL Integration | pip install datacontract-cli[mysql] |

| Oracle | pip install datacontract-cli[oracle] |

| Parquet | pip install datacontract-cli[parquet] |

| PostgreSQL Integration | pip install datacontract-cli[postgres] |

| protobuf | pip install datacontract-cli[protobuf] |

| RDF | pip install datacontract-cli[rdf] |

| Amazon Redshift | pip install datacontract-cli[redshift] |

| S3 Integration | pip install datacontract-cli[s3] |

| Snowflake Integration | pip install datacontract-cli[snowflake] |

| Microsoft SQL Server | pip install datacontract-cli[sqlserver] |

| Trino | pip install datacontract-cli[trino] |

| API (run as web server) | pip install datacontract-cli[api] |

Documentation

Commands

init

Usage: datacontract init [OPTIONS] [LOCATION]

Create an empty data contract.

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────╮

│ location [LOCATION] The location of the data contract file to create. │

│ [default: datacontract.yaml] │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --template TEXT URL of a template or data contract │

│ --overwrite --no-overwrite Replace the existing datacontract.yaml │

│ [default: no-overwrite] │

│ --debug --no-debug Enable debug logging │

│ --help Show this message and exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract init datacontract.yaml

edit

Usage: datacontract edit [OPTIONS] [LOCATION]

Edit a data contract file in the Data Contract Editor (web UI).

Starts a local web server that opens the Data Contract Editor for the given file.

The editor is bundled with the CLI, so no internet access is required.

Saving in the editor writes directly back to the local file.

The server also acts as the editor's test runner: "Run test" in the editor executes

the data contract tests locally against the servers defined in the data contract.

Credentials for the data sources must be provided as environment variables, see

https://cli.datacontract.com/#test

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────╮

│ location [LOCATION] The path of the data contract yaml to edit. If the file does not │

│ exist, you are asked whether to initialize a new data contract. │

│ [default: datacontract.yaml] │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --port INTEGER Bind socket to this port. [default: 4243] │

│ --host TEXT Bind socket to this host. Hint: For running in │

│ docker, set it to 0.0.0.0 │

│ [default: 127.0.0.1] │

│ --editor-version TEXT Version of the datacontract-editor npm package to │

│ load from the CDN, e.g. '0.1.9' or 'latest'. By │

│ default, the editor version bundled with the CLI │

│ is used (works offline). │

│ --editor-assets-url TEXT Base URL to load the Data Contract Editor assets │

│ (JS/CSS) from, e.g. a self-hosted editor build. │

│ Takes precedence over --editor-version. │

│ --open --no-open Open the editor in the default browser. │

│ [default: open] │

│ --debug --no-debug Enable debug logging │

│ --help Show this message and exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract edit datacontract.yaml

Requires the api extra (pip install 'datacontract-cli[api]').

The Data Contract Editor assets bundled with the CLI are used by default (works offline); use --editor-version to load a specific editor version from the CDN or --editor-assets-url to point to a self-hosted build.

lint

Usage: datacontract lint [OPTIONS] [LOCATION]

Validate that the datacontract.yaml is correctly formatted.

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────╮

│ location [LOCATION] The location (url or path) of the data contract yaml. │

│ [default: datacontract.yaml] │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --json-schema TEXT The location (url or path) of the │

│ ODCS JSON Schema │

│ --output PATH Specify the file path where the │

│ test results should be written to │

│ (e.g., │

│ './test-results/TEST-datacontrac… │

│ If no path is provided, the │

│ output will be printed to stdout. │

│ --output-format [json|junit] The target format for the test │

│ results. Accepted values: json, │

│ junit. │

│ --all-errors Report all JSON Schema validation │

│ errors instead of stopping after │

│ the first one. │

│ --inline-references --no-inline-references Resolve external references │

│ (currently: │

│ authoritativeDefinitions[type in │

│ {definition, semantics}]) in the │

│ contract and inline the fetched │

│ content from the configured │

│ entropy-data host. │

│ [default: inline-references] │

│ --debug --no-debug Enable debug logging │

│ --help Show this message and exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract lint datacontract.yaml

changelog

Usage: datacontract changelog [OPTIONS] V1 V2

Show a changelog between two data contracts.

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────╮

│ * v1 TEXT The location (url or path) of the source (before) data contract YAML. │

│ [required] │

│ * v2 TEXT The location (url or path) of the target (after) data contract YAML. │

│ [required] │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --inline-references --no-inline-references Resolve external references (currently: │

│ authoritativeDefinitions[type in {definition, │

│ semantics}]) in the contract and inline the │

│ fetched content from the configured │

│ entropy-data host. │

│ [default: inline-references] │

│ --debug --no-debug Enable debug logging │

│ --help Show this message and exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract changelog datacontract-v1.yaml datacontract-v2.yaml

$ datacontract changelog v1.odcs.yaml v2.odcs.yaml

test

Usage: datacontract test [OPTIONS] [LOCATION]

Run schema and quality tests on configured servers.

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────╮

│ location [LOCATION] The location (url or path) of the data contract yaml. │

│ [default: datacontract.yaml] │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --json-schema TEXT The location (url or │

│ path) of the ODCS JSON │

│ Schema │

│ --server TEXT The server configuration │

│ to run the schema and │

│ quality tests. Use the │

│ key of the server object │

│ in the data contract yaml │

│ file to refer to a │

│ server, e.g., │

│ `production`, or `all` │

│ for all servers │

│ (default). │

│ [default: all] │

│ --schema-name TEXT Which schema to test, │

│ e.g., `orders`, or `all` │

│ for all schemas │

│ (default). │

│ [default: all] │

│ --publish-test-results --no-publish-test-results Deprecated. Use publish │

│ parameter. Publish the │

│ results after the test │

│ [default: │

│ no-publish-test-results] │

│ --publish TEXT The url to publish the │

│ results after the test. │

│ --output PATH Specify the file path │

│ where the test results │

│ should be written to │

│ (e.g., │

│ './test-results/TEST-dat… │

│ --output-format [json|junit] The target format for the │

│ test results. Accepted │

│ values: json, junit. │

│ --checks TEXT Comma-separated list of │

│ check categories to run │

│ (available: schema, │

│ quality, servicelevel, │

│ custom). Omit to enable │

│ all. │

│ --include-failed-samples --no-include-failed-samp… Collect a small sample of │

│ rows that failed each │

│ missing/invalid/duplicate │

│ check (identifier + │

│ offending columns; │

│ sensitive columns │

│ omitted). Off by default. │

│ [default: │

│ no-include-failed-sample… │

│ --logs --no-logs Print logs │

│ [default: no-logs] │

│ --ssl-verification --no-ssl-verification SSL verification when │

│ publishing the data │

│ contract. │

│ [default: │

│ ssl-verification] │

│ --inline-references --no-inline-references Resolve external │

│ references (currently: │

│ authoritativeDefinitions… │

│ in {definition, │

│ semantics}]) in the │

│ contract and inline the │

│ fetched content from the │

│ configured entropy-data │

│ host. │

│ [default: │

│ inline-references] │

│ --debug --no-debug Enable debug logging │

│ --help Show this message and │

│ exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract test datacontract.yaml --server production

Data Contract CLI connects to a data source and runs schema and quality tests to verify that the data contract is valid.

$ datacontract test --server production datacontract.yaml

For CI/CD pipelines, see ci.

The application uses different engines, based on the server type.

Internally, it connects with DuckDB, Spark, or a native connection and executes most checks with ibis (compiling dialect-specific SQL per backend) and validates JSON with fastjsonschema.

Supported Data Sources

The server block in the datacontract.yaml is used to set up the connection.

In addition, credentials, such as usernames and passwords, are provided with environment variables.

Environment variables are also loaded from a .env file in the current working directory (or the nearest parent directory containing one, so you can run the CLI from a subfolder of your project). Already-set environment variables take precedence over values from the .env file.

# .env

DATACONTRACT_POSTGRES_USERNAME=postgres

DATACONTRACT_POSTGRES_PASSWORD=postgres
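
The lookup and precedence rules described above can be sketched in a few lines of Python. This is an illustration of the behavior only, not the CLI's actual implementation; `find_dotenv` and `load_dotenv_values` are hypothetical helpers, and the parser handles only plain KEY=VALUE lines.

```python
import os
from pathlib import Path
from typing import Optional


def find_dotenv(start: Path) -> Optional[Path]:
    """Return the nearest .env file in `start` or one of its parent directories."""
    for directory in [start, *start.parents]:
        candidate = directory / ".env"
        if candidate.is_file():
            return candidate
    return None


def load_dotenv_values(path: Path, environ=os.environ) -> None:
    """Copy KEY=VALUE pairs into `environ` without overriding existing keys."""
    for raw_line in path.read_text().splitlines():
        line = raw_line.strip()
        # Skip blank lines, comments, and anything that is not KEY=VALUE.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # setdefault keeps already-set variables, so they take precedence.
        environ.setdefault(key.strip(), value.strip())
```

Calling `find_dotenv(Path.cwd())` from a project subfolder returns the project's .env file if one exists further up the tree.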

Feel free to create an issue if you need support for additional types and formats.

S3

Data Contract CLI can test data that is stored in S3 buckets or any S3-compliant endpoints in various formats.

- CSV

- JSON

- Delta

- Parquet

- Iceberg (coming soon)

Examples

JSON

datacontract.yaml

servers:

  production:

    type: s3

    endpointUrl: https://minio.example.com # not needed with AWS S3

    location: s3://bucket-name/path/*/*.json

    format: json

    delimiter: new_line # new_line, array, or none

Delta Tables

datacontract.yaml

servers:

  production:

    type: s3

    endpointUrl: https://minio.example.com # not needed with AWS S3

    location: s3://bucket-name/path/table.delta # path to the Delta table folder containing parquet data files and the _delta_log

    format: delta

Environment Variables

| Environment Variable | Example | Description |

|---|---|---|

| DATACONTRACT_S3_REGION | eu-central-1 | Region of S3 bucket |

| DATACONTRACT_S3_ACCESS_KEY_ID | AKIAXV5Q5QABCDEFGH | AWS Access Key ID |

| DATACONTRACT_S3_SECRET_ACCESS_KEY | 93S7LRrJcqLaaaa/XXXXXXXXXXXXX | AWS Secret Access Key |

| DATACONTRACT_S3_SESSION_TOKEN | AQoDYXdzEJr... | AWS temporary session token (optional) |

Athena

Data Contract CLI can test data in AWS Athena stored in S3. Supports different file formats, such as Iceberg, Parquet, JSON, CSV…

Example

datacontract.yaml

servers:

  athena:

    type: athena

    catalog: awsdatacatalog # awsdatacatalog is the default setting

    schema: icebergdemodb # in Athena, this is called "database"

    regionName: eu-central-1

    stagingDir: s3://my-bucket/athena-results/

models:

  my_table: # corresponds to a table or view name

    type: table

    fields:

      my_column_1: # corresponds to a column

        type: string

        config:

          physicalType: varchar

Environment Variables

| Environment Variable | Example | Description |

|---|---|---|

| DATACONTRACT_S3_REGION | eu-central-1 | Region of Athena service |

| DATACONTRACT_S3_ACCESS_KEY_ID | AKIAXV5Q5QABCDEFGH | AWS Access Key ID |

| DATACONTRACT_S3_SECRET_ACCESS_KEY | 93S7LRrJcqLaaaa/XXXXXXXXXXXXX | AWS Secret Access Key |

| DATACONTRACT_S3_SESSION_TOKEN | AQoDYXdzEJr... | AWS temporary session token (optional) |

Google Cloud Storage (GCS)

The S3 integration also works with files on Google Cloud Storage through its interoperability.

Use https://storage.googleapis.com as the endpoint URL.

Example

datacontract.yaml

servers:

  production:

    type: s3

    endpointUrl: https://storage.googleapis.com

    location: s3://bucket-name/path/*/*.json # use the s3:// scheme instead of gs://

    format: json

    delimiter: new_line # new_line, array, or none

Environment Variables

| Environment Variable | Example | Description |

|---|---|---|

| DATACONTRACT_S3_ACCESS_KEY_ID | GOOG1EZZZ... | The GCS HMAC key ID |

| DATACONTRACT_S3_SECRET_ACCESS_KEY | PDWWpb... | The GCS HMAC key secret |

BigQuery

We support authentication to BigQuery using Service Account Key or Application Default Credentials (ADC). ADC supports Workload Identity Federation (WIF), GCE metadata server, and gcloud auth application-default login. The used Service Account should include the roles:

- BigQuery Job User

- BigQuery Data Viewer

When no DATACONTRACT_BIGQUERY_ACCOUNT_INFO_JSON_PATH is set, the CLI falls back to Application Default Credentials (ADC/WIF) automatically.

Example

datacontract.yaml

servers:

  production:

    type: bigquery

    project: datameshexample-product

    dataset: datacontract_cli_test_dataset

models:

  datacontract_cli_test_table: # corresponds to a BigQuery table

    type: table

    fields: ...

Environment Variables

| Environment Variable | Example | Description |

|---|---|---|

| DATACONTRACT_BIGQUERY_ACCOUNT_INFO_JSON_PATH | ~/service-access-key.json | Service Account key JSON file. If not set, ADC/WIF is used automatically. |

| DATACONTRACT_BIGQUERY_IMPERSONATION_ACCOUNT | [email protected] | Optional. Service account to impersonate. Works with both key file and ADC auth. |
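
The credential selection described in this section can be summarized as a small decision function. This is a sketch of the documented rules only; `bigquery_auth_mode` and its return labels are hypothetical and do not reflect the CLI's internal code.

```python
import os


def bigquery_auth_mode(environ=os.environ):
    """Describe which BigQuery credentials the documented rules would select."""
    key_path = environ.get("DATACONTRACT_BIGQUERY_ACCOUNT_INFO_JSON_PATH")
    impersonate = environ.get("DATACONTRACT_BIGQUERY_IMPERSONATION_ACCOUNT")
    return {
        # A key file wins; otherwise Application Default Credentials are used.
        "credentials": "service-account-key" if key_path else "application-default",
        "key_path": key_path or None,
        # Impersonation is optional and independent of the credential source.
        "impersonate": impersonate or None,
    }
```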

Azure

Data Contract CLI can test data that is stored in Azure Blob storage or Azure Data Lake Storage (Gen2) (ADLS) in various formats.

Example

datacontract.yaml

servers:

  production:

    type: azure

    location: abfss://datameshdatabricksdemo.dfs.core.windows.net/inventory_events/*.parquet

    format: parquet

Environment Variables

Authentication works with an Azure Service Principal (SPN) aka App Registration with a secret.

| Environment Variable | Example | Description |

|---|---|---|

| DATACONTRACT_AZURE_TENANT_ID | 79f5b80f-10ff-40b9-9d1f-774b42d605fc | The Azure Tenant ID |

| DATACONTRACT_AZURE_CLIENT_ID | 3cf7ce49-e2e9-4cbc-a922-4328d4a58622 | The ApplicationID / ClientID of the app registration |

| DATACONTRACT_AZURE_CLIENT_SECRET | yZK8Q~GWO1MMXXXXXXXXXXXXX | The Client Secret value |

SQL Server

Data Contract CLI can test data in MS SQL Server (including Azure SQL, Synapse Analytics SQL Pool, and Microsoft Fabric).

Example

datacontract.yaml

servers:

  production:

    type: sqlserver

    host: localhost

    port: 1433 # default SQL Server port

    database: tempdb

    schema: dbo

    driver: ODBC Driver 18 for SQL Server

models:

  my_table_1: # corresponds to a table

    type: table

    fields:

      my_column_1: # corresponds to a column

        type: varchar

Environment Variables

| Environment Variable | Example | Description |

|---|---|---|

| DATACONTRACT_SQLSERVER_AUTHENTICATION | sql | Supported: sql (default), cli (uses az login session), windows, ActiveDirectoryPassword, ActiveDirectoryServicePrincipal, ActiveDirectoryInteractive |

| DATACONTRACT_SQLSERVER_USERNAME | root | Username (for sql, ActiveDirectoryPassword, ActiveDirectoryInteractive) |

| DATACONTRACT_SQLSERVER_PASSWORD | toor | Password (for sql and ActiveDirectoryPassword) |

| DATACONTRACT_SQLSERVER_CLIENT_ID | a3cf5d29-b1a7-... | Application/Client ID (for ActiveDirectoryServicePrincipal) |

| DATACONTRACT_SQLSERVER_CLIENT_SECRET | kX9~Qr2Lm.Tz4W... | Client secret (for ActiveDirectoryServicePrincipal) |

| DATACONTRACT_SQLSERVER_TRUST_SERVER_CERTIFICATE | True | Trust self-signed certificate |

| DATACONTRACT_SQLSERVER_ENCRYPTED_CONNECTION | True | Use SSL |

| DATACONTRACT_SQLSERVER_DRIVER | ODBC Driver 18 for SQL Server | ODBC driver name |

| DATACONTRACT_SQLSERVER_TRUSTED_CONNECTION | True | Deprecated. Equivalent to AUTHENTICATION=windows |

The cli mode reuses an az login session through the Azure default credential chain and requires ODBC Driver 18.1 or newer.
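
Because each authentication mode needs a different set of variables, a small pre-flight check can catch missing credentials before a test run. The sketch below encodes the table above; `missing_sqlserver_variables` is a hypothetical helper, and it assumes that mode names are matched exactly as written in the table.

```python
import os

PREFIX = "DATACONTRACT_SQLSERVER_"

# Variables each authentication mode needs, as listed in the table above.
REQUIRED_BY_MODE = {
    "sql": ["USERNAME", "PASSWORD"],
    "ActiveDirectoryPassword": ["USERNAME", "PASSWORD"],
    "ActiveDirectoryInteractive": ["USERNAME"],
    "ActiveDirectoryServicePrincipal": ["CLIENT_ID", "CLIENT_SECRET"],
    "windows": [],
    "cli": [],
}


def missing_sqlserver_variables(environ=os.environ):
    """Return the required variables that are unset for the selected mode."""
    mode = environ.get(PREFIX + "AUTHENTICATION", "sql")  # sql is the default
    if mode not in REQUIRED_BY_MODE:
        raise ValueError(f"Unsupported authentication mode: {mode}")
    return [
        PREFIX + name
        for name in REQUIRED_BY_MODE[mode]
        if not environ.get(PREFIX + name)
    ]
```

An empty list means that all variables needed for the selected mode are present.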

Oracle

Data Contract CLI can test data in Oracle Database.

Example

datacontract.yaml

servers:

  oracle:

    type: oracle

    host: localhost

    port: 1521

    service_name: ORCL

    schema: ADMIN

models:

  my_table_1: # corresponds to a table

    type: table

    fields:

      my_column_1: # corresponds to a column

        type: decimal

        description: Decimal number

      my_column_2: # corresponds to another column

        type: text

        description: Unicode text string

        config:

          oracleType: NVARCHAR2 # optional: can be used to explicitly define the type used in the database

                                # if not set a default mapping will be used

Environment Variables

These environment variables specify the credentials used by the datacontract tool to connect to the database.

If you’ve started the database from a container, e.g. oracle-free, the credentials should match either the system user with the password you specified as ORACLE_PASSWORD on the container, or alternatively the values you specified as APP_USER and APP_USER_PASSWORD.

If you require thick mode to connect to the database, you need to have an Oracle Instant Client installed on the system and specify the path to the installation in the environment variable DATACONTRACT_ORACLE_CLIENT_DIR.

| Environment Variable | Example | Description |

|---|---|---|

| DATACONTRACT_ORACLE_USERNAME | system | Username |

| DATACONTRACT_ORACLE_PASSWORD | 0x162e53 | Password |

| DATACONTRACT_ORACLE_CLIENT_DIR | C:\oracle\client | Path to Oracle Instant Client installation |

Databricks

Works with Unity Catalog and Hive metastore.

Needs a running SQL warehouse or compute cluster.

Example

datacontract.yaml

servers:

  production:

    type: databricks

    catalog: acme_catalog_prod

    schema: orders_latest

models:

  orders: # corresponds to a table

    type: table

    fields: ...

Environment Variables

| Environment Variable | Example | Description |

|---|---|---|

| DATACONTRACT_DATABRICKS_SERVER_HOSTNAME | dbc-abcdefgh-1234.cloud.databricks.com | The host name of the SQL warehouse or compute cluster |

| DATACONTRACT_DATABRICKS_HTTP_PATH | /sql/1.0/warehouses/b053a3ffffffff | The HTTP path to the SQL warehouse or compute cluster |

| DATACONTRACT_DATABRICKS_TOKEN | dapia00000000000000000000000000000 | A personal access token (PAT) to authenticate |

| DATACONTRACT_DATABRICKS_CLIENT_ID | 00000000-0000-0000-0000-000000000000 | Service principal application (client) ID for OAuth machine-to-machine (M2M) auth |

| DATACONTRACT_DATABRICKS_CLIENT_SECRET | dose00000000000000000000000000000000 | Service principal OAuth secret, used together with the client ID |

| DATACONTRACT_DATABRICKS_PROFILE | my-profile | A profile from ~/.databrickscfg, delegating to the Databricks SDK unified auth (also resolves Azure CLI / managed identity) |

| DATACONTRACT_DATABRICKS_AUTH_TYPE | databricks-oauth | Explicit connector auth type, e.g. databricks-oauth for the interactive user-to-machine (U2M) browser login |

The authentication method is selected from the variables you set, in this order:

a personal access token, then an OAuth service principal (CLIENT_ID + CLIENT_SECRET),

then a config profile, then an explicit AUTH_TYPE.
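
The precedence described above can be written as a short decision function. This is a sketch of the documented order only; `select_databricks_auth` and the returned labels are hypothetical and are not taken from the CLI's code.

```python
import os

PREFIX = "DATACONTRACT_DATABRICKS_"


def select_databricks_auth(environ=os.environ):
    """Return a label for the auth method implied by the variables that are set."""

    def has(name):
        return bool(environ.get(PREFIX + name))

    if has("TOKEN"):
        return "personal-access-token"
    # The OAuth service principal needs both the client ID and the secret.
    if has("CLIENT_ID") and has("CLIENT_SECRET"):
        return "oauth-m2m"
    if has("PROFILE"):
        return "profile"
    if has("AUTH_TYPE"):
        return "auth-type"
    return None
```

For example, if both a token and a profile are set, the token is used, because it comes first in the order.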

Databricks (programmatic)

Works with Unity Catalog and Hive metastore.

When running in a notebook or pipeline, the provided spark session can be used.

Additional authentication is not required.

Requires a Databricks Runtime with Python >= 3.10.

Example

datacontract.yaml

servers:

  production:

    type: databricks

    host: dbc-abcdefgh-1234.cloud.databricks.com # ignored, always use current host

    catalog: acme_catalog_prod

    schema: orders_latest

models:

  orders: # corresponds to a table

    type: table

    fields: ...

Installing on Databricks Compute

Important: When using Databricks LTS ML runtimes (15.4, 16.4), installing via %pip install in notebooks can cause issues.

Recommended approach: Use Databricks’ native library management instead:

- Create or configure your compute cluster:

  - Navigate to Compute in the Databricks workspace

  - Create a new cluster or select an existing one

  - Go to the Libraries tab

- Add the datacontract-cli library:

  - Click Install new

  - Select PyPI as the library source

  - Enter package name: datacontract-cli[databricks]

  - Click Install

- Restart the cluster to apply the library installation

- Use in your notebook without additional installation:

from datacontract.data_contract import DataContract

data_contract = DataContract(

    data_contract_file="/Volumes/acme_catalog_prod/orders_latest/datacontract/datacontract.yaml",

    spark=spark)

run = data_contract.test()

run.result

Databricks’ library management properly resolves dependencies during cluster initialization, rather than at runtime in the notebook.

Dataframe (programmatic)

Works with Spark DataFrames. DataFrames need to be created as named temporary views. Multiple temporary views are supported if your data contract contains multiple models.

Testing DataFrames is useful to test your datasets in a pipeline before writing them to a data source.

Example

datacontract.yaml

servers:

  production:

    type: dataframe

models:

  my_table: # corresponds to a temporary view

    type: table

    fields: ...

Example code

from datacontract.data_contract import DataContract

df.createOrReplaceTempView("my_table")

data_contract = DataContract(

    data_contract_file="datacontract.yaml",

    spark=spark,

)

run = data_contract.test()

assert run.result == "passed"

Snowflake

Data Contract CLI can test data in Snowflake.

Example

datacontract.yaml

servers:

  snowflake:

    type: snowflake

    account: abcdefg-xn12345

    database: ORDER_DB

    schema: ORDERS_PII_V2

models:

  my_table_1: # corresponds to a table

    type: table

    fields:

      my_column_1: # corresponds to a column

        type: varchar

Environment Variables

Any DATACONTRACT_SNOWFLAKE_-prefixed variable is passed (lowercased, prefix stripped) as a connection parameter to the snowflake-connector-python driver.

Depending on the authenticator mode required by your Snowflake workspace, please set your environment variables accordingly.

For example:

| Soda parameter | Environment Variable |
|---|---|
| username | DATACONTRACT_SNOWFLAKE_USERNAME |
| password | DATACONTRACT_SNOWFLAKE_PASSWORD |
| warehouse | DATACONTRACT_SNOWFLAKE_WAREHOUSE |
| role | DATACONTRACT_SNOWFLAKE_ROLE |
| connection_timeout | DATACONTRACT_SNOWFLAKE_CONNECTION_TIMEOUT |
| authenticator | DATACONTRACT_SNOWFLAKE_AUTHENTICATOR |
| private_key | DATACONTRACT_SNOWFLAKE_PRIVATE_KEY |
| private_key_passphrase | DATACONTRACT_SNOWFLAKE_PRIVATE_KEY_PASSPHRASE |
| private_key_path | DATACONTRACT_SNOWFLAKE_PRIVATE_KEY_PATH |

Note that the parameters account, database, and schema are obtained from the servers section of the YAML file.

E.g. from the example above:

servers:
  snowflake:
    account: abcdefg-xn12345
    database: ORDER_DB
    schema: ORDERS_PII_V2
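The prefix rule for DATACONTRACT_SNOWFLAKE_ variables described above can be illustrated in a few lines of Python. This is a minimal sketch of the documented behaviour (strip the prefix, lowercase the rest), not the CLI's actual implementation; the function name snowflake_connection_params and the example values are hypothetical.

```python
import os
from typing import Mapping, Optional

PREFIX = "DATACONTRACT_SNOWFLAKE_"


def snowflake_connection_params(env: Optional[Mapping[str, str]] = None) -> dict:
    """Collect DATACONTRACT_SNOWFLAKE_* variables as lowercased parameter names.

    Hypothetical helper: it only mirrors the rule stated in the text
    (prefix stripped, remainder lowercased).
    """
    source = os.environ if env is None else env
    return {
        name[len(PREFIX):].lower(): value
        for name, value in source.items()
        if name.startswith(PREFIX) and len(name) > len(PREFIX)
    }


# Example with an explicit mapping instead of the real process environment
example_env = {
    "DATACONTRACT_SNOWFLAKE_USERNAME": "alice",
    "DATACONTRACT_SNOWFLAKE_WAREHOUSE": "COMPUTE_WH",
    "PATH": "/usr/bin",  # ignored: does not carry the prefix
}
print(snowflake_connection_params(example_env))
```

Calling the function without arguments reads from the real process environment, which is handy for checking which parameters would be picked up before running a test.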

Kafka

Kafka support is currently considered experimental.

Example

datacontract.yaml

servers:
  production:
    type: kafka
    host: abc-12345.eu-central-1.aws.confluent.cloud:9092
    topic: my-topic-name
    format: json

Environment Variables

| Environment Variable | Example | Description |
|---|---|---|
| DATACONTRACT_KAFKA_SASL_USERNAME | xxx | The SASL username (key). |
| DATACONTRACT_KAFKA_SASL_PASSWORD | xxx | The SASL password (secret). |
| DATACONTRACT_KAFKA_SASL_MECHANISM | PLAIN | Default PLAIN. Other supported mechanisms: SCRAM-SHA-256 and SCRAM-SHA-512 |

Postgres

Data Contract CLI can test data in Postgres or Postgres-compliant databases (e.g., RisingWave).

Example

datacontract.yaml

servers:
  postgres:
    type: postgres
    host: localhost
    port: 5432
    database: postgres
    schema: public
models:
  my_table_1: # corresponds to a table
    type: table
    fields:
      my_column_1: # corresponds to a column
        type: varchar

Environment Variables

| Environment Variable | Example | Description |
|---|---|---|
| DATACONTRACT_POSTGRES_USERNAME | postgres | Username |
| DATACONTRACT_POSTGRES_PASSWORD | mysecretpassword | Password |

Amazon Redshift

Data Contract CLI can test data in Amazon Redshift (both provisioned clusters and Redshift Serverless).

Example

datacontract.yaml

servers:
  redshift:
    type: redshift
    host: my-workgroup.123456789012.us-east-1.redshift-serverless.amazonaws.com
    port: 5439
    database: dev
    schema: analytics
models:
  my_table_1: # corresponds to a table
    type: table
    fields:
      my_column_1: # corresponds to a column
        type: varchar

Environment Variables

Redshift is reached over the PostgreSQL wire protocol via the ibis Postgres backend, using username/password authentication.

| Connection parameter | Environment Variable |
|---|---|
| user | DATACONTRACT_REDSHIFT_USERNAME |
| password | DATACONTRACT_REDSHIFT_PASSWORD |

Note: IAM-based authentication (region / access key / role ARN) is not currently supported for Redshift, because ibis connects through the generic Postgres backend rather than a Redshift-specific driver.

MySQL

Data Contract CLI can test data in MySQL or MySQL-compliant databases (e.g., MariaDB).

Example

datacontract.yaml

servers:
  mysql:
    type: mysql
    host: localhost
    port: 3306
    database: mydb
models:
  my_table_1: # corresponds to a table
    type: table
    fields:
      my_column_1: # corresponds to a column
        type: varchar

Environment Variables

| Environment Variable | Example | Description |
|---|---|---|
| DATACONTRACT_MYSQL_USERNAME | root | Username |
| DATACONTRACT_MYSQL_PASSWORD | mysecretpassword | Password |

Trino

Data Contract CLI can test data in Trino.

Example

datacontract.yaml

servers:
  trino:
    type: trino
    host: localhost
    port: 8080
    catalog: my_catalog
    schema: my_schema
models:
  my_table_1: # corresponds to a table
    type: table
    fields:
      my_column_1: # corresponds to a column
        type: varchar
      my_column_2: # corresponds to a column with custom trino type
        type: object
        config:
          trinoType: row(en_us varchar, pt_br varchar)

Environment Variables

| Environment Variable | Example | Description |
|---|---|---|
| DATACONTRACT_TRINO_USERNAME | trino | Username |
| DATACONTRACT_TRINO_PASSWORD | mysecretpassword | Password |

Impala

Data Contract CLI can run checks against an Apache Impala cluster.

Example

datacontract.yaml

servers:
  impala:
    type: impala
    host: my-impala-host
    port: 443
    # Optional default database used for the checks
    database: my_database
models:
  my_table_1: # corresponds to a table
    type: table
    # fields as usual …

Environment Variables

| Environment Variable | Example | Description |
|---|---|---|
| DATACONTRACT_IMPALA_USERNAME | analytics_user | Username used to connect to Impala |
| DATACONTRACT_IMPALA_PASSWORD | mysecretpassword | Password for the Impala user |
| DATACONTRACT_IMPALA_USE_SSL | true | Whether to use SSL; defaults to true if unset |
| DATACONTRACT_IMPALA_AUTH_MECHANISM | LDAP | Authentication mechanism; defaults to LDAP |
| DATACONTRACT_IMPALA_USE_HTTP_TRANSPORT | true | Whether to use the HTTP transport; defaults to true |
| DATACONTRACT_IMPALA_HTTP_PATH | cliservice | HTTP path for the Impala service; defaults to cliservice |

Type-mapping note (logicalType → Impala type)

If physicalType is not specified in the schema, we recommend the following mapping from logicalType to Impala column types:

| logicalType | Recommended Impala type |
|---|---|
| integer | INT or BIGINT |
| number | DOUBLE/decimal(..) |
| string | STRING or VARCHAR |
| boolean | BOOLEAN |
| date | DATE |
| datetime | TIMESTAMP |

This keeps the Impala schema compatible with the expectations of the checks generated by datacontract-cli.
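If you create Impala tables from a contract yourself, the recommendation above can be captured in a small lookup. The following is only a sketch: the helper name impala_type is hypothetical, and where the table offers two options the first one is picked as the default, which is an assumption rather than something the CLI prescribes.

```python
from typing import Optional

# Recommended Impala types per logicalType, taken from the table above.
# Assumption: when two options are listed, the first one is used as default.
RECOMMENDED_IMPALA_TYPES = {
    "integer": "INT",
    "number": "DOUBLE",
    "string": "STRING",
    "boolean": "BOOLEAN",
    "date": "DATE",
    "datetime": "TIMESTAMP",
}


def impala_type(logical_type: str, physical_type: Optional[str] = None) -> str:
    """Return the explicit physicalType if given, else the recommended type."""
    if physical_type:
        return physical_type
    try:
        return RECOMMENDED_IMPALA_TYPES[logical_type]
    except KeyError:
        raise ValueError(
            f"No recommended Impala type for logicalType {logical_type!r}"
        ) from None


print(impala_type("datetime"))
print(impala_type("number", physical_type="DECIMAL(10,2)"))
```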

API

Data Contract CLI can test APIs that return data in JSON format. Currently, only GET requests are supported.

Example

datacontract.yaml

servers:
  api:
    type: "api"
    location: "https://api.example.com/path"
    delimiter: none # new_line, array, or none (default)
models:
  my_object: # corresponds to the root element of the JSON response
    type: object
    fields:
      field1:
        type: string
      field2:
        type: number

Environment Variables

| Environment Variable | Example | Description |
|---|---|---|
| DATACONTRACT_API_HEADER_AUTHORIZATION | Bearer <token> | The value for the authorization header. Optional. |
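To make the delimiter setting from the example above more concrete, here is a sketch of how a JSON response body could be split into records for each of the three values. The interpretation is an assumption (none: the whole body is one object, array: the body is a top-level JSON array, new_line: one JSON object per line), and split_records is a hypothetical helper, not part of the CLI.

```python
import json
from typing import Any, List


def split_records(body: str, delimiter: str = "none") -> List[Any]:
    """Split a JSON response body into records (assumed semantics, see above)."""
    if delimiter == "none":
        # The whole response is a single JSON document
        return [json.loads(body)]
    if delimiter == "array":
        records = json.loads(body)
        if not isinstance(records, list):
            raise ValueError("expected a top-level JSON array")
        return records
    if delimiter == "new_line":
        # Newline-delimited JSON: skip empty lines
        return [json.loads(line) for line in body.splitlines() if line.strip()]
    raise ValueError(f"unsupported delimiter: {delimiter!r}")


print(split_records('{"field1": "a", "field2": 1.5}'))
print(split_records('[{"field1": "a"}, {"field1": "b"}]', delimiter="array"))
```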

Local

Data Contract CLI can test local files in parquet, json, csv, or delta format.

Example

datacontract.yaml

servers:
  local:
    type: local
    path: ./*.parquet
    format: parquet
models:
  my_table_1: # corresponds to a table
    type: table
    fields:
      my_column_1: # corresponds to a column
        type: varchar
      my_column_2: # corresponds to a column
        type: string

dbt sync

The dbt sync command is still a work in progress and will receive further functionality and documentation soon.

Usage: datacontract dbt sync [OPTIONS] [CONTRACT]

Generate dbt tests from an ODCS contract and run them.

Within the specified dbt project, this command wipes <model-paths>/datacontract_cli/ and

<test-paths>/datacontract_cli/, regenerates them from the contract, then runs dbt test.

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────╮
│ contract [CONTRACT] Path to the ODCS data contract. If omitted, searches for a single │
│ *.odcs.yaml in the current directory and its subdirectories. │
╰──────────────────────────────────────────────────────────────────────────────────────────────────╯
╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮
│ --project-dir PATH Path to the dbt project root │
│ (must contain │
│ dbt_project.yml). Defaults │
│ to the current directory. │
│ --schema-name TEXT Which ODCS schema object to │
│ sync, by name. │
│ [default: all] │
│ --model-resolution [name|physicalName] How to map an ODCS schema to │
│ a dbt model name. │
│ [default: name] │
│ --target TEXT Forwarded to dbt test │
│ --target. │
│ --profiles-dir PATH Forwarded to dbt test │
│ --profiles-dir. │
│ --skip-tests --run-tests Generate tests but skip │
│ running dbt test. │
│ [default: run-tests] │
│ --publish TEXT The url to publish the │
│ results after the test. │
│ --server TEXT ODCS server name for │
│ published test results. │
│ Auto-selected if the │
│ contract contains only one │
│ server. Otherwise defaults │
│ to --target. │
│ --ssl-verification --no-ssl-verification SSL verification when │
│ publishing test results. │
│ [default: ssl-verification] │
│ --debug --no-debug Enable debug logging │
│ --help Show this message and exit. │
╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract dbt sync orders.odcs.yaml --project-dir ./warehouse

`datacontract dbt sync` generates dbt tests from an ODCS data contract directly into your dbt project and (by default) runs `dbt test` against them. The contract becomes the single source of truth for column-level constraints and quality checks. It is recommended to remove existing dbt tests to avoid duplication.

On each run, the command:

- **Wipes and regenerates** the `models/datacontract_cli/` and `tests/datacontract_cli/` directories under your dbt project. The paths honor `model-paths` and `test-paths` in `dbt_project.yml`.

- **Emits one YAML model file per ODCS schema** that uses dbt's built-in tests and [`dbt_utils`](https://github.com/dbt-labs/dbt-utils).

- **Emits singular SQL tests** for all ODCS `quality` checks that can't be expressed as native YAML tests.

- **Runs `dbt test --select tag:datacontract_cli`** to run the generated tests; pre-existing dbt tests are untouched. Pass `--skip-tests` to regenerate without invoking dbt.

Prerequisites: `dbt-core` plus an adapter (e.g. `dbt-duckdb`, `dbt-postgres`) on `PATH`, [`dbt_utils`](https://github.com/dbt-labs/dbt-utils) installed in your dbt project's `packages.yml`.

```bash
# Auto-discover a contract named *.odcs.yaml in a dbt project
$ datacontract dbt sync

# Explicit contract, run against a specific dbt target
$ datacontract dbt sync orders.odcs.yaml --project-dir ./warehouse --target dev

# Only generate dbt tests, don't run them
$ datacontract dbt sync orders.odcs.yaml --skip-tests

# Run and publish results to an Entropy Data instance
$ datacontract dbt sync orders.odcs.yaml --publish https://api.entropy-data.com/api/test-results
```

ci

Usage: datacontract ci [OPTIONS] [LOCATIONS]...

Run tests for CI/CD pipelines. Emits GitHub Actions annotations and step summary.

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────╮

│ locations [LOCATIONS]... The location(s) (url or path) of the data contract yaml │

│ file(s). │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --json-schema TEXT The location (url or │

│ path) of the ODCS JSON │

│ Schema │

│ --server TEXT The server configuration │

│ to run the schema and │

│ quality tests. Use the │

│ key of the server object │

│ in the data contract │

│ yaml file to refer to a │

│ server, e.g., │

│ `production`, or `all` │

│ for all servers │

│ (default). │

│ [default: all] │

│ --publish TEXT The url to publish the │

│ results after the test. │

│ --output PATH Specify the file path │

│ where the test results │

│ should be written to │

│ (e.g., │

│ './test-results/TEST-da… │

│ --output-format [json|junit] The target format for │

│ the test results. │

│ Accepted values: json, │

│ junit. │

│ --logs --no-logs Print logs │

│ [default: no-logs] │

│ --json Print test results as │

│ JSON to stdout. │

│ --fail-on [warning|error|never] Minimum severity that │

│ causes a non-zero exit │

│ code. │

│ [default: error] │

│ --ssl-verification --no-ssl-verification SSL verification when │

│ publishing the data │

│ contract. │

│ [default: │

│ ssl-verification] │

│ --inline-references --no-inline-references Resolve external │

│ references (currently: │

│ authoritativeDefinition… │

│ in {definition, │

│ semantics}]) in the │

│ contract and inline the │

│ fetched content from the │

│ configured entropy-data │

│ host. │

│ [default: │

│ inline-references] │

│ --debug --no-debug Enable debug logging │

│ --help Show this message and │

│ exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract ci datacontract.yaml --output test-results.xml --output-format junit

The ci command wraps test with CI/CD-specific features:

- Multiple contracts: datacontract ci contracts/*.yaml

- CI annotations: Inline annotations for failed checks (GitHub Actions and Azure DevOps)

- Markdown summary of the test results (GitHub Actions)

- --json: Print test results as JSON to stdout for machine-readable output

- --fail-on: Control the minimum severity that causes a non-zero exit code. Default is error; set to warning to also fail on warnings, or never to always exit 0.

The supported server types and their configuration are equivalent to the test command.

# Single contract

$ datacontract ci datacontract.yaml

# Multiple contracts

$ datacontract ci contracts/*.yaml

# Fail on warnings too

$ datacontract ci --fail-on warning datacontract.yaml

# JSON output for scripting

$ datacontract ci --json datacontract.yaml
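The --fail-on threshold described above can be pictured as a comparison of severities. The sketch below is an illustration only, not the CLI's implementation: the result names, their ranking, the concrete exit code 1, and the function name exit_code are all assumptions (the documentation only states that the exit code is non-zero).

```python
from typing import Iterable

# Assumed ranking of check results; "failed" and "error" are treated alike here.
SEVERITY = {"passed": 0, "warning": 1, "failed": 2, "error": 2}
# Minimum severity that triggers a failure for each --fail-on value
THRESHOLD = {"warning": 1, "error": 2}


def exit_code(results: Iterable[str], fail_on: str = "error") -> int:
    """Return 0 or 1 depending on the worst result and the fail_on threshold."""
    if fail_on == "never":
        return 0
    if fail_on not in THRESHOLD:
        raise ValueError(f"unsupported fail_on value: {fail_on!r}")
    # Unknown result names are ranked like "passed" in this sketch
    worst = max((SEVERITY.get(result, 0) for result in results), default=0)
    return 1 if worst >= THRESHOLD[fail_on] else 0


print(exit_code(["passed", "warning"]))                     # default: error
print(exit_code(["passed", "warning"], fail_on="warning"))
print(exit_code(["failed"], fail_on="never"))
```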

GitHub Actions workflow example

# .github/workflows/datacontract.yml
name: Data Contract CI

on:
  push:
    branches: [main]
  pull_request:

jobs:
  datacontract-ci:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.11"
      - run: pip install datacontract-cli
      # Test one or more data contracts (supports globs, e.g. contracts/*.yaml)
      - run: datacontract ci datacontract.yaml

Azure DevOps pipeline example

# azure-pipelines.yml
trigger:
  branches:
    include:
      - main

pool:
  vmImage: "ubuntu-latest"

steps:
  - task: UsePythonVersion@0
    inputs:
      versionSpec: "3.11"
  - script: pip install datacontract-cli
    displayName: "Install datacontract-cli"
  # Test one or more data contracts (supports globs, e.g. contracts/*.yaml)
  - script: datacontract ci datacontract.yaml
    displayName: "Run data contract tests"

export

Usage: datacontract export [OPTIONS] COMMAND [ARGS]...

Convert a data contract to a target format.

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --help Show this message and exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

╭─ Commands ───────────────────────────────────────────────────────────────────────────────────────╮

│ sql Export a data contract to SQL DDL. │

│ sql-query Export a data contract to a SQL query. │

│ dbt-models Export a data contract to dbt model schema YAML. │

│ dbt-sources Export a data contract to dbt sources YAML. │

│ dbt-staging-sql Export a data contract to a dbt staging SQL file. │

│ avro Export a data contract to Avro schema. │

│ avro-idl Export a data contract to Avro IDL. │

│ jsonschema Export a data contract to JSON Schema. │

│ pydantic-model Export a data contract to a Pydantic model. │

│ protobuf Export a data contract to Protobuf schema. │

│ odcs Export a data contract to ODCS format. │

│ rdf Export a data contract to RDF. │

│ html Export a data contract to HTML. │

│ markdown Export a data contract to Markdown. │

│ mermaid Export a data contract to Mermaid diagram. │

│ bigquery Export a data contract to BigQuery schema. │

│ dbml Export a data contract to DBML. │

│ go Export a data contract to Go structs. │

│ spark Export a data contract to Spark schema. │

│ sqlalchemy Export a data contract to SQLAlchemy models. │

│ iceberg Export a data contract to Iceberg schema. │

│ sodacl Export a data contract to SodaCL checks. │

│ great-expectations Export a data contract to Great Expectations suite. │

│ data-caterer Export a data contract to Data Caterer format. │

│ dcs Export a data contract to DCS format. │

│ dqx Export a data contract to DQX format. │

│ excel Export a data contract to Excel. │

│ custom Export a data contract using a custom Jinja template. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract export html datacontract.yaml --output datacontract.html

For SQL dialects (postgres, mysql, snowflake, databricks, sqlserver, trino, oracle), use

`datacontract export sql --dialect <dialect>`.

Run datacontract export <format> --help to see the format-specific options (e.g. datacontract export sql --help). If you are missing a format, please create an issue on GitHub.

Examples

# Example export data contract as HTML

datacontract export html --output datacontract.html

SQL

The export function converts a given data contract into a SQL data definition language (DDL).

datacontract export sql datacontract.yaml --output output.sql

The SQL dialect is determined from the servers block in the data contract (e.g. type: postgres, type: snowflake). Alternatively, pass it explicitly:

datacontract export sql datacontract.yaml --dialect postgres --output output.sql

If using Databricks and an error is thrown when deploying SQL DDLs with variant columns, set the following property.

spark.conf.set("spark.databricks.delta.schema.typeCheck.enabled", "false")

Great Expectations

The export function transforms a specified data contract into a comprehensive Great Expectations JSON suite.

If the contract includes multiple models, you need to specify the names of the schema/models you wish to export.

datacontract export great-expectations datacontract.yaml --schema-name orders

The export creates a list of expectations by utilizing:

- The data from the Model definition with a fixed mapping

- The expectations provided in the quality field for each model (see the Great Expectations Gallery for the available expectations)

Additional Arguments

To further customize the export, the following optional arguments are available:

- suite_name: The name of the expectation suite. This suite groups all generated expectations and provides a convenient identifier within Great Expectations. If not provided, a default suite name will be generated based on the model name(s).

- engine: Specifies the engine used to run Great Expectations checks. Accepted values are:

  - pandas: Use this when working with in-memory data frames through the Pandas library.

  - spark: Use this for working with Spark dataframes.

  - sql: Use this for working with SQL databases.

- sql_server_type: Specifies the type of SQL server to connect with when engine is set to sql. Providing sql_server_type ensures that the appropriate SQL dialect and connection settings are applied during the expectation validation.

RDF

The export function converts a given data contract into an RDF representation. You can optionally pass a base URL via --base, which is used as the default prefix to resolve relative IRIs inside the document.

datacontract export rdf --base https://www.example.com/ datacontract.yaml

The data contract is mapped onto the following concepts of a yet to be defined Data Contract Ontology named https://datacontract.com/DataContractSpecification/ :

- DataContract

- Server

- Model

Having the data contract inside an RDF graph gives us access to the following use cases:

- Interoperability with other data contract specification formats

- Store data contracts inside a knowledge graph

- Enhance a semantic search to find and retrieve data contracts

- Linking model elements to already established ontologies and knowledge

- Using full power of OWL to reason about the graph structure of data contracts

- Apply graph algorithms on multiple data contracts (Find similar data contracts, find “gatekeeper” data products, find the true domain owner of a field attribute)

DBML

If a server is selected via the --server option, the export function converts the logical data types of the data contract into the specific types of a concrete database (based on the type of that server). If no server is selected, the logical data types are exported.

DBT & DBT-SOURCES

The export function converts the datacontract to dbt models in YAML format, with support for SQL dialects.

If a server is selected via the --server option (based on the type of that server) then the DBT column data_types match the expected data types of the server.

If no server is selected, then it defaults to snowflake.

Spark

The export function converts the data contract specification into a StructType Spark schema. The returned value is Python code that represents the model schemas. A Spark DataFrame schema is defined as a StructType. For more details about Spark data types, please see the Spark documentation.

Avro

The export function converts the data contract specification into an Avro schema. It supports specifying custom Avro properties for logicalTypes and default values.

Custom Avro Properties

We support a config map on field level. A config map may include any additional key-value pairs and support multiple server type bindings.

To specify custom Avro properties in your data contract, you can define them within the config section of your field definition. Below is an example of how to structure your YAML configuration to include custom Avro properties, such as avroLogicalType and avroDefault.

NOTE: At this moment, we just support logicalType and default

Example Configuration

models:
  orders:
    fields:
      my_field_1:
        description: Example for AVRO with Timestamp (microsecond precision) https://avro.apache.org/docs/current/spec.html#Local+timestamp+%28microsecond+precision%29
        type: long
        example: 1672534861000000 # Equivalent to 2023-01-01 01:01:01 in microseconds
        required: true
        config:
          avroLogicalType: local-timestamp-micros
          avroDefault: 1672534861000000

Explanation

- models: The top-level key that contains different models (tables or objects) in your data contract.

- orders: A specific model name. Replace this with the name of your model.

- fields: The fields within the model. Each field can have various properties defined.

- my_field_1: The name of a specific field. Replace this with your field name.

- description: A textual description of the field.

- type: The data type of the field. In this example, it is long.

- example: An example value for the field.

- required: Is this a required field (as opposed to optional/nullable).

- config: Section to specify custom Avro properties.

- avroLogicalType: Specifies the logical type of the field in Avro. In this example, it is local-timestamp-micros.

- avroDefault: Specifies the default value for the field in Avro. In this example, it is 1672534861000000, which corresponds to 2023-01-01 01:01:01 UTC.
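To show how the two config keys relate to an Avro field, the following sketch builds a field definition in the shape the Avro specification uses for logical types (a nested type object carrying logicalType, plus a default). The helper avro_field is hypothetical, and the exact JSON the exporter emits may differ. The last lines double-check the example value by reading it as microseconds since the Unix epoch.

```python
import json
from datetime import datetime, timezone


def avro_field(name: str, avro_type: str, config: dict) -> dict:
    """Build an Avro field dict from a type and a config map (hypothetical helper)."""
    field_type = avro_type
    if "avroLogicalType" in config:
        # Avro annotates the primitive type with a logicalType attribute
        field_type = {"type": avro_type, "logicalType": config["avroLogicalType"]}
    field = {"name": name, "type": field_type}
    if "avroDefault" in config:
        field["default"] = config["avroDefault"]
    return field


field = avro_field(
    "my_field_1",
    "long",
    {"avroLogicalType": "local-timestamp-micros", "avroDefault": 1672534861000000},
)
print(json.dumps(field, indent=2))

# Sanity check of the example value, interpreted as UTC for this check
micros = 1672534861000000
as_datetime = datetime.fromtimestamp(micros // 1_000_000, tz=timezone.utc)
print(as_datetime.isoformat())
```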

Data Caterer

The export function converts the data contract to a data generation task in YAML format that can be ingested by Data Caterer. This gives you the ability to generate production-like data in any environment based off your data contract.

datacontract export data-caterer datacontract.yaml --schema-name orders

You can further customise the way data is generated via adding additional metadata in the YAML to suit your needs.

Iceberg

Exports to an Iceberg Table Json Schema Definition.

This export supports only a single model at a time, because an Iceberg schema definition describes a single table and the exporter maps one model to one table. Use the --schema-name flag to limit your contract export to a single model.

$ datacontract export iceberg --schema-name orders https://datacontract.com/examples/orders-latest/datacontract.yaml --output /tmp/orders_iceberg.json

$ cat /tmp/orders_iceberg.json | jq '.'

{
  "type": "struct",
  "fields": [
    {
      "id": 1,
      "name": "order_id",
      "type": "string",
      "required": true
    },
    {
      "id": 2,
      "name": "order_timestamp",
      "type": "timestamptz",
      "required": true
    },
    {
      "id": 3,
      "name": "order_total",
      "type": "long",
      "required": true
    },
    {
      "id": 4,
      "name": "customer_id",
      "type": "string",
      "required": false
    },
    {
      "id": 5,
      "name": "customer_email_address",
      "type": "string",
      "required": true
    },
    {
      "id": 6,
      "name": "processed_timestamp",
      "type": "timestamptz",
      "required": true
    }
  ],
  "schema-id": 0,
  "identifier-field-ids": [
    1
  ]
}
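The exported file is plain JSON, so it can be inspected with the standard library. As a small sketch, the snippet below summarizes required, optional, and identifier fields; it embeds a shortened copy of the output above (two of the six fields) so that it runs on its own, and summarize_schema is a hypothetical helper name.

```python
import json

# Shortened copy of the exported schema shown above (two of the six fields)
SCHEMA_JSON = """
{
  "type": "struct",
  "fields": [
    {"id": 1, "name": "order_id", "type": "string", "required": true},
    {"id": 4, "name": "customer_id", "type": "string", "required": false}
  ],
  "schema-id": 0,
  "identifier-field-ids": [1]
}
"""


def summarize_schema(schema: dict) -> dict:
    """List required, optional, and identifier field names of an Iceberg schema."""
    names_by_id = {field["id"]: field["name"] for field in schema["fields"]}
    return {
        "required": [f["name"] for f in schema["fields"] if f["required"]],
        "optional": [f["name"] for f in schema["fields"] if not f["required"]],
        "identifier": [
            names_by_id[field_id]
            for field_id in schema.get("identifier-field-ids", [])
        ],
    }


summary = summarize_schema(json.loads(SCHEMA_JSON))
print(summary)
```

To run this against a real export, load the file written by the command above (for example /tmp/orders_iceberg.json) with json.load instead of the embedded string.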

Custom

The export function converts the data contract specification into a custom format using Jinja. You can specify the path to a Jinja template with the --template argument, allowing you to output files in any format.

datacontract export custom --template template.txt datacontract.yaml

Jinja templates & variables

You can directly use the ODCS (Open Data Contract Standard) object as a template variable. data_contract is an OpenDataContractStandard instance; top-level fields include name, id, version, schema_ (the list of schemas), servers, team, etc.

$ cat template.txt

title: {{ data_contract.name }}

schemas:

{%- for schema in data_contract.schema_ %}

- name: {{ schema.name }}

{%- endfor %}

$ datacontract export custom --template template.txt datacontract.yaml

title: Orders Latest

schemas:

- name: orders

Example Jinja Templates for a customized dbt model

You can export a customized dbt model containing any logic by adding the --schema-name parameter (in ODCS, “schemas” are the equivalent of “models” in dbt).

This passes the following additional Jinja variables to your template file:

- schema_name: str

- schema: SchemaObject from ODCS

Below is an example of a dbt staging layer that converts a field of type: timestamp to a DATETIME type with time zone conversion.

- template.sql

SELECT
{%- for field in schema.properties %}
  {%- if field.physicalType == "timestamp" %}
    DATETIME({{ field.name }}, "Asia/Tokyo") AS {{ field.name }},
  {%- else %}
    {{ field.name }} AS {{ field.name }},
  {%- endif %}
{%- endfor %}
FROM
  {{ "{{" }} ref('{{ schema_name }}') {{ "}}" }}

- export command

datacontract export custom datacontract.odcs.yaml --template template.sql --schema-name orders

- output.sql

SELECT
  order_id AS order_id,
  DATETIME(order_timestamp, "Asia/Tokyo") AS order_timestamp,
  order_total AS order_total,
  customer_id AS customer_id,
  customer_email_address AS customer_email_address,
  DATETIME(processed_timestamp, "Asia/Tokyo") AS processed_timestamp,
FROM
  {{ ref('orders') }}

ODCS Excel Template

The export function converts a data contract into an ODCS (Open Data Contract Standard) Excel template. This creates a user-friendly Excel spreadsheet that can be used for authoring, sharing, and managing data contracts using the familiar Excel interface.

datacontract export excel --output datacontract.xlsx datacontract.yaml

The Excel format enables:

- User-friendly authoring: Create and edit data contracts in Excel’s familiar interface

- Easy sharing: Distribute data contracts as standard Excel files

- Collaboration: Enable non-technical stakeholders to contribute to data contract definitions

- Round-trip conversion: Import Excel templates back to YAML data contracts

For more information about the Excel template structure, visit the ODCS Excel Template repository.

import

Usage: datacontract import [OPTIONS] COMMAND [ARGS]...

Create a data contract from a source format.

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --help Show this message and exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

╭─ Commands ───────────────────────────────────────────────────────────────────────────────────────╮

│ dbt Import a data contract from a dbt manifest file. │

│ sql Import a data contract from a SQL DDL file. │

│ avro Import a data contract from an Avro schema file. │

│ dbml Import a data contract from a DBML file. │

│ glue Import a data contract from AWS Glue. │

│ bigquery Import a data contract from BigQuery. │

│ unity Import a data contract from Databricks Unity Catalog. │

│ jsonschema Import a data contract from a JSON Schema file. │

│ json Import a data contract from a JSON file. │

│ odcs Import a data contract from an ODCS file. │

│ parquet Import a data contract from a Parquet file. │

│ csv Import a data contract from a CSV file. │

│ protobuf Import a data contract from a Protobuf schema file. │

│ spark Import a data contract from a Spark schema. │

│ iceberg Import a data contract from an Iceberg schema. │

│ excel Import a data contract from an Excel file. │

│ snowflake Import a data contract from a Snowflake workspace. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract import sql --source ddl.sql --dialect postgres --output datacontract.yaml

Run datacontract import <format> --help to see the format-specific options (e.g. datacontract import sql --help). If you are missing a format, please create an issue on GitHub.

Examples

# Example import from SQL DDL

datacontract import sql --source my_ddl.sql --dialect postgres

# To save to file

datacontract import sql --source my_ddl.sql --dialect postgres --output datacontract.yaml

BigQuery

BigQuery data can be imported either from JSON files generated from the table descriptions or directly from the BigQuery API. To use JSON files, set the --source parameter to the path of the JSON file.

To import from the BigQuery API, omit --source and provide --project and --dataset instead. Additionally, you may specify --table to enumerate the tables that should be imported. If no tables are given, all available tables of the dataset will be imported.

To authenticate the client, please see the Google documentation or the documentation about authorizing client libraries.

Examples:

# Example import from Bigquery JSON

datacontract import bigquery --source my_bigquery_table.json

# Example import from Bigquery API with specifying the tables to import

datacontract import bigquery --project <project_id> --dataset <dataset_id> --table <tableid_1> --table <tableid_2> --table <tableid_3>

# Example import from Bigquery API importing all tables in the dataset

datacontract import bigquery --project <project_id> --dataset <dataset_id>
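When the import is scripted, the repeated --table flags are easy to assemble programmatically. The sketch below only builds the argument list for the commands shown above; bigquery_import_command is a hypothetical helper, and the project, dataset, and table names are placeholders.

```python
import shlex
from typing import List, Optional, Sequence


def bigquery_import_command(
    project: Optional[str] = None,
    dataset: Optional[str] = None,
    tables: Sequence[str] = (),
    source: Optional[str] = None,
) -> List[str]:
    """Build the argument list for `datacontract import bigquery`."""
    command = ["datacontract", "import", "bigquery"]
    if source is not None:
        # JSON file mode: only --source is passed
        return command + ["--source", source]
    if not (project and dataset):
        raise ValueError("project and dataset are required when source is omitted")
    command += ["--project", project, "--dataset", dataset]
    for table in tables:
        # One --table flag per table; no flag means all tables of the dataset
        command += ["--table", table]
    return command


command = bigquery_import_command(
    project="my-project", dataset="my_dataset", tables=["orders", "line_items"]
)
print(shlex.join(command))
```

The resulting list can be passed to subprocess.run(command, check=True) on a machine where the CLI is installed and BigQuery credentials are configured.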

Unity Catalog

# Example import from a Unity Catalog JSON file

datacontract import unity --source my_unity_table.json

# Example import single table from Unity Catalog via HTTP endpoint using PAT

export DATACONTRACT_DATABRICKS_SERVER_HOSTNAME="https://xyz.cloud.databricks.com"

export DATACONTRACT_DATABRICKS_TOKEN=<token>

datacontract import unity --table <table_full_name>

Please refer to Databricks documentation on how to set up a profile

# Example import single table from Unity Catalog via HTTP endpoint using Profile

export DATACONTRACT_DATABRICKS_PROFILE="my-profile"

datacontract import unity --table <table_full_name>

dbt

Importing from dbt manifest file.

You may give the --model parameter to enumerate the models that should be imported. If no models are given, all available models in the manifest will be imported.

For versioned dbt models, use the name.vN convention to target a specific version. Omitting the version suffix imports all versions of that model.

Examples:

# Example import from dbt manifest with specifying the tables to import

datacontract import dbt --source <manifest_path> --model <model_name_1> --model <model_name_2> --model <model_name_3>

# Example import from dbt manifest importing all tables in the database

datacontract import dbt --source <manifest_path>
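The name.vN convention for versioned dbt models mentioned above can be read as a simple selector rule. The sketch below illustrates that reading only; it is not the importer's code, both function names are hypothetical, and it assumes integer version numbers.

```python
import re
from typing import Optional, Tuple

# "orders.v2" -> name "orders", version 2 (assumes integer versions)
_VERSIONED = re.compile(r"^(?P<name>.+)\.v(?P<version>\d+)$")


def parse_model_selector(selector: str) -> Tuple[str, Optional[int]]:
    """Split a --model value into (name, version); version is None if absent."""
    match = _VERSIONED.match(selector)
    if match:
        return match.group("name"), int(match.group("version"))
    return selector, None


def selects(selector: str, model_name: str, model_version: Optional[int]) -> bool:
    """True if the selector targets the given model version."""
    name, version = parse_model_selector(selector)
    if name != model_name:
        return False
    # Without a version suffix, every version of the model is selected
    return version is None or version == model_version


print(parse_model_selector("orders.v2"))
print(selects("orders", "orders", 3))
print(selects("orders.v2", "orders", 3))
```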

Excel

Importing from ODCS Excel Template.

Examples:

# Example import from ODCS Excel Template

datacontract import excel --source odcs.xlsx

Glue

Importing from Glue reads the necessary data directly from the AWS API.

You may give the --table parameter to enumerate the tables that should be imported. If no tables are given, all available tables of the database will be imported.

Examples:

# Example import from AWS Glue with specifying the tables to import

datacontract import glue --database <database_name> --table <table_name_1> --table <table_name_2> --table <table_name_3>

# Example import from AWS Glue importing all tables in the database

datacontract import glue --database <database_name>

Spark

Importing from a Spark table or view. These must be created or accessible in the Spark context. Specify the list of tables in the --tables option. If the tables are registered in Databricks and have a table-level description, the description is also added to the generated data contract.

# Example: Import Spark table(s) from Spark context

datacontract import spark --tables "users,orders"

# Example: Import Spark table (the positional and keyword forms are equivalent)

from datacontract.data_contract import DataContract

DataContract.import_from_source("spark", "users")

DataContract.import_from_source(format="spark", source="users")

# Example: Import Spark dataframe (df_user is an existing Spark DataFrame)

DataContract.import_from_source("spark", "users", dataframe=df_user)

DataContract.import_from_source(format="spark", source="users", dataframe=df_user)

# Example: Import Spark table + table description

DataContract.import_from_source("spark", "users", description="description")

DataContract.import_from_source(format="spark", source="users", description="description")

# Example: Import Spark dataframe + table description

DataContract.import_from_source("spark", "users", dataframe=df_user, description="description")

DataContract.import_from_source(format="spark", source="users", dataframe=df_user, description="description")

DBML

Importing from DBML documents. NOTE: Since DBML does not impose strict requirements on column types, this import may create invalid data contracts, because not all field types can be mapped properly. In that case you will have to adapt the generated document manually. We also assume that the description for models and fields is stored in a Note within the DBML model.

You may give the --table or --schema parameter to enumerate the tables or schemas that should be imported.

If no tables are given, all available tables of the source will be imported. Likewise, if no schema is given, all schemas are imported.

Examples:

# Example import from DBML file, importing everything

datacontract import dbml --source <file_path>

# Example import from DBML file, filtering for tables from specific schemas

datacontract import dbml --source <file_path> --schema <schema_1> --schema <schema_2>

# Example import from DBML file, filtering for tables with specific names

datacontract import dbml --source <file_path> --table <table_name_1> --table <table_name_2>

# Example import from DBML file, filtering for tables with specific names from a specific schema

datacontract import dbml --source <file_path> --table <table_name_1> --schema <schema_1>
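The way the two filters combine can be illustrated in a few lines. This is only a sketch of the behaviour described above, not the importer's code: `select_tables` and the sample schema and table names are hypothetical.

```python
def select_tables(tables, table_names=None, schemas=None):
    """Filter (schema, table) pairs the way --table and --schema are described."""
    selected = []
    for schema, table in tables:
        # An empty or missing filter means "no restriction".
        if schemas and schema not in schemas:
            continue
        if table_names and table not in table_names:
            continue
        selected.append((schema, table))
    return selected


# Hypothetical content of a DBML document.
tables = [("sales", "orders"), ("sales", "customers"), ("hr", "employees")]

everything = select_tables(tables)
only_sales = select_tables(tables, schemas=["sales"])
orders_in_sales = select_tables(tables, table_names=["orders"], schemas=["sales"])
```

A table is kept only if it passes both filters, which matches the last example: a specific table name from a specific schema.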

Iceberg

Importing from an Iceberg table JSON schema definition. Specify the location of the JSON file using the --source parameter.

Examples:

datacontract import iceberg --source ./tests/fixtures/iceberg/simple_schema.json --table test-table

CSV

Importing from a CSV file. Specify the file in the --source parameter. The encoding and CSV dialect are detected automatically.

Example:

datacontract import csv --source "test.csv"

protobuf

Importing from a protobuf file. Specify the file in the --source parameter.

.proto files are parsed with a pure-Python parser, so no protoc compiler or other system dependency is required. Transitive import statements (including across subdirectories) are resolved automatically.

Example:

datacontract import protobuf --source "test.proto"

snowflake

Importing from a Snowflake schema. Specify the Snowflake account in the --source parameter, the database name in --database, and the schema in --schema.

Multiple authentication methods are supported:

Login/password, used when the DATACONTRACT_SNOWFLAKE_ ... environment variables (the same ones used for testing) are set.

MFA using an external browser, selected when DATACONTRACT_SNOWFLAKE_PASSWORD is missing.

TOML file authentication using the default profile, used when the SNOWFLAKE_DEFAULT_CONNECTION_NAME environment variable is defined.

Example:

datacontract import snowflake --source account.canada-central.azure --database databaseName --schema schemaName

catalog

Usage: datacontract catalog [OPTIONS]

Create a html catalog of data contracts.

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --files TEXT Glob pattern for the data contract files to include in the │

│ catalog. Applies recursively to any subfolders. │

│ [default: *.yaml] │

│ --output TEXT Output directory for the catalog html files. │

│ [default: catalog/] │

│ --json-schema TEXT The location (url or path) of the ODCS JSON Schema │

│ --debug --no-debug Enable debug logging │

│ --help Show this message and exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract catalog --files "**/*.yaml" --output catalog/

Examples:

# create a catalog right in the current folder

datacontract catalog --output "."

# Create a catalog based on a filename convention

datacontract catalog --files "*.odcs.yaml"
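To preview which files a --files pattern would pick up before building the catalog, the recursive matching can be approximated with `pathlib`. This sketch assumes the pattern behaves like `Path.rglob`, which fits the help text ("Applies recursively to any subfolders") but is not confirmed here. `matching_contracts` and the file names are hypothetical.

```python
import tempfile
from pathlib import Path


def matching_contracts(root, pattern="*.yaml"):
    """List files under root that match pattern, searching subfolders too."""
    return sorted(p.relative_to(root).as_posix() for p in Path(root).rglob(pattern))


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "sales").mkdir()
    # Hypothetical project layout with one non-contract YAML file.
    for name in ["orders.odcs.yaml", "sales/customers.odcs.yaml", "notes.yaml"]:
        (root / name).write_text("# placeholder\n")

    all_yaml = matching_contracts(root)
    odcs_only = matching_contracts(root, "*.odcs.yaml")

print(all_yaml)
print(odcs_only)
```

With the default pattern, `notes.yaml` would be considered as well, which is why a filename convention such as `*.odcs.yaml` can be useful.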

publish

Usage: datacontract publish [OPTIONS] [LOCATION]

Publish the data contract to the Entropy Data.

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────╮

│ location [LOCATION] The location (url or path) of the data contract yaml. │

│ [default: datacontract.yaml] │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --json-schema TEXT The location (url or path) of the ODCS JSON │

│ Schema │

│ --ssl-verification --no-ssl-verification SSL verification when publishing the data │

│ contract. │

│ [default: ssl-verification] │

│ --debug --no-debug Enable debug logging │

│ --help Show this message and exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract publish datacontract.yaml

api

Usage: datacontract api [OPTIONS]

Start the datacontract CLI as server application with REST API.

The OpenAPI documentation as Swagger UI is available on http://localhost:4242.

You can execute the commands directly from the Swagger UI.

To protect the API, you can set the environment variable DATACONTRACT_CLI_API_KEY to a secret API

key.

To authenticate, requests must include the header 'x-api-key' with the correct API key.

This is highly recommended, as data contract tests may be subject to SQL injections or leak

sensitive information.

To connect to servers (such as a Snowflake data source), set the credentials as environment

variables as documented in

https://cli.datacontract.com/#test

It is possible to run the API with extra arguments for `uvicorn.run()` as keyword arguments, e.g.:

`datacontract api --port 1234 --root_path /datacontract`.

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────╮

│ --port INTEGER Bind socket to this port. [default: 4242] │

│ --host TEXT Bind socket to this host. Hint: For running in docker, set it │

│ to 0.0.0.0 │

│ [default: 127.0.0.1] │

│ --debug --no-debug Enable debug logging │

│ --help Show this message and exit. │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

Example: datacontract api --port 4242 --host 0.0.0.0
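A client that talks to a protected API has to send the 'x-api-key' header described in the help text. The sketch below only prepares such a request with the standard library and does not send it. `build_api_request` is a hypothetical helper, the fallback key is a placeholder, and the path "/" simply points at the documented Swagger UI address; the actual endpoint paths are listed in the Swagger UI and are not assumed here.

```python
import os
import urllib.request


def build_api_request(base_url, path, api_key, body=None):
    """Prepare (but do not send) a request carrying the x-api-key header."""
    url = base_url.rstrip("/") + "/" + path.lstrip("/")
    request = urllib.request.Request(url, data=body)
    # Header names are case-insensitive; urllib stores this one as "X-api-key".
    request.add_header("x-api-key", api_key)
    return request


# Reuse the key the server was started with; "change-me" is a placeholder.
api_key = os.environ.get("DATACONTRACT_CLI_API_KEY", "change-me")
request = build_api_request("http://localhost:4242", "/", api_key)

# To send it once the server is running:
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```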

Integrations

| Integration | Option | Description |
|---|---|---|
| Entropy Data | --publish | Push full results to the Entropy Data API |

Integration with Entropy Data

If you use Entropy Data, you can reference the contract by its data contract URL and append the --publish option to send and display the test results. Set an environment variable for your API key.